AIoT in High-Volume Manufacturing Network

Introduction

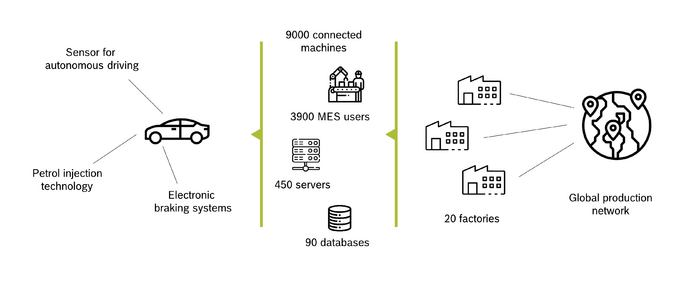

Bosch Chassis Systems Control (CC) is a division of Bosch that develops and manufactures components, systems and functions in the field of vehicle safety, vehicle dynamics and driver assistance. The products from Bosch CC combine cameras, radar and ultrasonic sensors, electric power steering and active or passive safety systems to improve driver safety and comfort. Bosch CC is a global organization with twenty factories around the world. Very high volumes, combined with a high product variety, characterize Bosch CC production. Large numbers of specially designed and commissioned machines are deployed to ensure high levels of automation. Organized in a global production network, plants can realize synergies at scale.

Naturally, IT plays an important role in product engineering, process development, and manufacturing. Bosch CC runs more than 90 databases and 450 servers, with 9,000 machines connected to them. A total of 3,900 users access the central MES (Manufacturing Execution System) to track and document the flow of materials and products through the production process.

Phase I: Data-centric Continuous Improvement

Like most modern manufacturing organizations, Bosch CC strives to continually improve product output and reduce costs while maintaining the highest levels of quality. One of the key challenges of doing this in a global production network is standardization and harmonization. The starting point is the machinery and equipment in the factories, and especially the data that can be accessed from it. Over the years, Bosch CC has invested significantly in such harmonization efforts. This was the prerequisite for the first data-centric optimization program, which focused on EAI (Enterprise Application Integration, with a focus on data integration) and BI (Business Intelligence, with a focus on data visualization). Making harmonized data easily accessible to the production staff resulted in a 13% output increase per year over the last five years. The data-centric continuous improvement program was only possible because of the efforts in standardizing processes, machines and equipment and in making the data from the 9,000 connected machines easily accessible to staff on the factory floor.

The data-centric improvement initiative mainly focuses on two areas:

- Descriptive Analytics (Visual Analytics): What happened?

- Diagnostic Analytics (Data Mining): Why did it happen?

The program is still ongoing, with increasingly advanced diagnostic analytics.

Phase II: AI-centric Continuous Improvement

Building on the data-centric improvement program is the next initiative, the AI-centric Continuous Improvement Program. While the first wave predominantly focused on gaining raw information from the integrated data layer, the second wave applied AI and Machine Learning with a focus on:

- Predictive Analytics (ML): What will happen?

- Prescriptive Analytics (ML): What to do about it?

As of the time of writing, this new initiative has been going on for two years, with the first results coming out of the initial use cases, supporting the assumption that this initiative will add at least a further 10% in annual production output increase.

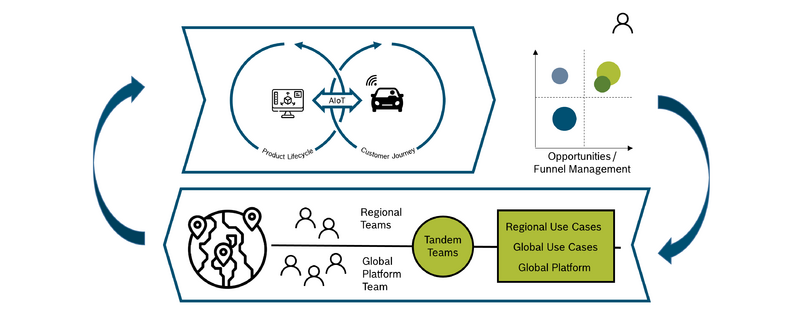

Closed-Loop Optimization

The approach taken by the Bosch CC team fully supports the Bosch AIoT Cycle, which assumes that AI and IoT support the entire product lifecycle, from product design through production setup to manufacturing. The ability to gain insights into how products are performing in the field provides an invaluable advantage for product design and engineering. Closing the loop with machine building and development departments via AI-gained insights enables the creation of new, more efficient machines, as well as product designs better suited to efficient production. Finally, applying AI to machine data gained via the IoT enables root cause analysis for production inefficiencies, optimization of process conditions, detection of bad parts, and prediction of machine maintenance requirements.

Program Setup

A key challenge of the AI-driven Continuous Improvement program is that the optimization potential cannot be found in a single place, but is rather hidden in many different places along the engineering and manufacturing value chain. To achieve the goal of a 10% output increase, hundreds of AIoT use cases have to be identified and implemented every year. This means that this process has to be highly industrialized.

To set up such an industrialized approach, the program leadership identified a number of success factors, including:

- Establishment of a global team to coordinate the efforts across all factories in the different regions and provide centralized infrastructure and services

- Close collaboration with the experts in the different regions, working together with experts from the global team in so-called tandem teams

The global team started by defining a vision and execution plan for the central AIoT platform, which combines an AI pipeline with central cloud compute resources as well as edge compute capabilities close to the lines on the factory floors.

Next, the team started to work with the regional experts to identify the most relevant use cases. Together, the global team and regional experts prioritize these use cases. The central platform is then gradually advanced to support the selected use cases. This ensures that the platform features always support the needs of the use case implementation teams. The tandem teams consist of central platform experts as well as regional process experts. Depending on the type of use case, they include Data Analysts, and potentially Data Scientists for the development of more complex models. Data Engineers support the integration of the required systems, as well as potentially required customization of the AI pipeline. The teams strive to ensure that the regionally developed use cases are integrated back into the global use case portfolio so that they can be made accessible to all other factories in the Bosch CC global manufacturing network.

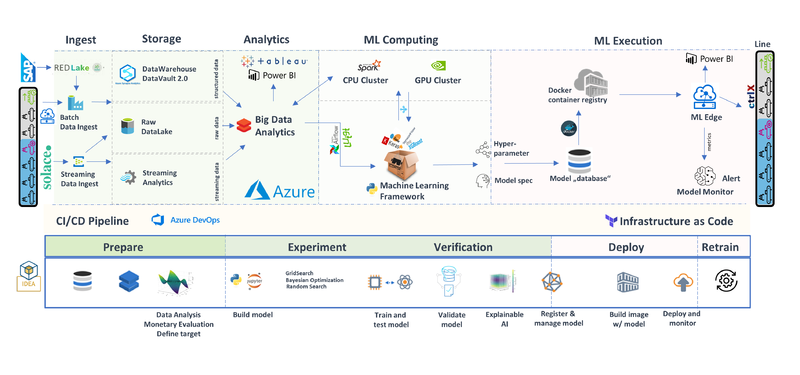

AIoT Platform and AI Pipeline

The AIoT platform being built by Bosch CC combines traditional data analytics capabilities with advanced AI/ML capabilities. The data ingest layer integrates data from all relevant data sources, including MES and ERP. Both batch and real-time ingest are supported. Different storage services are available to support the different input types. The data analytics layer is running on Microsoft Azure, utilizing Tableau and Power BI for visual analytics. For advanced analytics, a machine learning framework is provided, which can utilize dedicated ML compute infrastructure (GPUs and CPUs), depending on the task at hand. The trained models are stored in a central model repository. From there, they can be deployed to the different edge nodes in the factories. Local model monitoring helps to gain insights into model performance and support alerting. The AI pipeline supports an efficient CI/CD process and allows for automated model retraining and redeployment.
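The path from the central model repository to the edge nodes described above can be pictured with a small in-memory sketch. It is an illustration only, not the Bosch CC implementation: the names `ModelRepository`, `EdgeNode` and `rollout`, as well as the artifact paths, are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    artifact_uri: str  # hypothetical location of the serialized model


@dataclass
class ModelRepository:
    """Central store of trained models (hypothetical, in-memory stand-in)."""
    versions: Dict[str, List[ModelVersion]] = field(default_factory=dict)

    def register(self, name: str, artifact_uri: str) -> ModelVersion:
        # Each registration of the same model name gets the next version number.
        history = self.versions.setdefault(name, [])
        model = ModelVersion(name, len(history) + 1, artifact_uri)
        history.append(model)
        return model

    def latest(self, name: str) -> ModelVersion:
        return self.versions[name][-1]


@dataclass
class EdgeNode:
    """An edge node in one factory; keeps one active version per model name."""
    plant: str
    deployed: Dict[str, ModelVersion] = field(default_factory=dict)

    def deploy(self, model: ModelVersion) -> None:
        self.deployed[model.name] = model


def rollout(repo: ModelRepository, name: str, nodes: List[EdgeNode]) -> ModelVersion:
    """Deploy the latest registered version of a model to every given edge node."""
    model = repo.latest(name)
    for node in nodes:
        node.deploy(model)
    return model
```

In this sketch, retraining simply means calling `register` again with a new artifact and repeating `rollout`, which mirrors the retrain-and-redeploy loop in a very simplified form.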

Expert Opinions

In the following interview with Sebastian Klüpfel (Bosch CC central AI platform team) and Uli Homann (Corporate Vice President at Microsoft and a member of the AIoT Editorial Board), some insights and lessons learned were shared.

Dirk Slama: Sebastian, how did you get started with your AI initiative?

Sebastian Klüpfel: Since 2000, we have been working on the standardization and connection of our manufacturing lines worldwide. Our manufacturing stations provide a continuous flow of data. For each station, we can access comprehensive information about machine conditions, quality-relevant data and even individual sensor values. By linking the upstream and downstream production stages on the data side, we achieve a perfect vertical connection. For example, for traceability reasons we store 2,500 data points per ABS/ESP part (ABS: Anti-lock Braking System, ESP: Electronic Stability Program). We use these data as the basis for our continuous improvement process. In this way, we were able to increase the production rate in the ABS/ESP manufacturing network by 13% annually over the last 18 years. All of this was accomplished just by working on the lower part of the I4.0 pyramid: the data access/information layer.

DS: Could you please describe the ABS/ESP use case in more detail?

SK: Our first use case for AI in manufacturing is at the end of the ABS/ESP final assembly, where all finished parts are checked for functionality. Until then, each part had been handled and tested separately during this test process, so the test program could not recognize whether a bad test result was caused by a real part defect or just by a variation in the testing process. As a result, we ran several repeated tests on parts that had tested bad to make sure that the part was really faulty. A defective part was thus tested up to four times before the final result was determined. This reduced the output of the line because the repeated tests kept the test bench running at full capacity. By applying AI, we reduce the bottleneck at the test bench significantly. Based on the first bad test result, the AI can detect whether a repeated test is useful or not. In the case of variations in the testing process, we start a second test and thus save a good part that would otherwise have been rejected. Defective parts, on the other hand, are detected and rejected immediately, which avoids unnecessary repeated checks. The decision of whether a repeated test makes sense is made by a neural network, which is trained on a large database and operated close to the line. The decision is processed directly on the machine. This was the first implementation of a closed loop with AI in our production. Our next AI use case was also in the ABS/ESP final assembly. Here we recognized a relation between parts that test bad at the test bench and the caulking process at the beginning of the line. With AI, we can detect and discharge these parts at the beginning of the line, before we install high-value components such as the motor and the control unit.
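Editorial illustration (not part of the interview): the retest decision described above can be sketched as a binary classification on the first bad test result. The interview mentions a neural network; the sketch below substitutes a single logistic unit to stay short. The feature meanings, weights and threshold are invented for illustration and are not taken from the Bosch CC system.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def retest_probability(features: Sequence[float],
                       weights: Sequence[float],
                       bias: float) -> float:
    """Estimated probability that a bad result stems from test variation.

    `features` are hypothetical measurements from the first test cycle,
    e.g. how far a value missed its limit and how noisy the bench was.
    """
    if len(features) != len(weights):
        raise ValueError("features and weights must have the same length")
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


def decide(features: Sequence[float],
           weights: Sequence[float],
           bias: float,
           threshold: float = 0.5) -> str:
    """Return 'retest' if a second test looks worthwhile, else 'reject'."""
    p = retest_probability(features, weights, bias)
    return "retest" if p >= threshold else "reject"


# Hypothetical weights: a large limit violation argues against a retest,
# high bench noise argues for one.
WEIGHTS = (-4.0, 1.5)
BIAS = 0.0

print(decide((0.1, 2.0), WEIGHTS, BIAS))  # small violation, noisy bench
print(decide((2.0, 0.1), WEIGHTS, BIAS))  # large violation, quiet bench
```

With these made-up weights, the first part is sent for a second test and the second is rejected immediately, which is the behavior the interview describes.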

DS: What about the change impact on your organization and systems?

SK: With the support from our top management, new roles and cooperation models were established. The following roles were introduced and staffed to implement AI use cases:

- Leadership: management and domain leaders need to understand the strategic relevance, advantages and limits of AI, and need an overview of the tools.

- Citizen Data Scientist: works in an increasingly data-driven field and applies analytical tools to their own domain. Therefore, a basic understanding of Big Data and machine learning is necessary.

- Data Engineer: builds Big Data systems and knows how to connect to these systems and machine learning tools. Therefore, deep knowledge of IT systems and development is necessary.

- Data Scientist: develops new algorithms and methods based on deep knowledge of existing methods. Therefore, the data scientist must stay up to date and have the know-how to lead analytics projects following CRISP-DM.

We want to use the acquired data in combination with AI with the maximum benefit for the profitability of our plant (data-driven organization). Only through the interaction and change in the working methods of people, machines and processes/organization can we create fundamentally new possibilities to initiate improvement processes and achieve productivity increases. We rely on cross-functional teams from different domains to guarantee quick success.

DS: What were the major challenges you were facing?

SK: For the implementation of these and future AI use cases, we rely on a uniform architecture. Without this architecture, the standardized and industrialized implementation of AI use cases is not possible. The basis is the detailed data for every manufacturing process, which are acquired from our standardized and connected manufacturing stations. Since 2000, we have had an MES (Manufacturing Execution System) in place that enables holistic data acquisition. The data is stored in our cloud (Data Lake). As a link between our machines and the cloud, we use proven web standards. After an intensive review of existing cloud solutions internally and externally, we decided to use the external Azure Cloud from Microsoft. Here, we can use as many resources as we need for data storage, training of AI models and preprocessing of data (Data Mart). We also scale financially: we only incur costs where we have a benefit. In addition, we can offer the possibility to analyze the prepared data of our Data Mart via individually created evaluations and diagrams (Tableau, Power BI). We run our trained models in an edge application close to our production lines. By using this edge application, we bring the decisions of the AI back to the line. For the connection of the AI to the line, only minimal adjustments to the line are necessary, and we guarantee a fast transfer of new use cases to other areas.

DS: What does your ROI look like for the first use cases?

SK: Since an AI decides on repeated tests on the testing stations of the ABS/ESP final assembly, we can detect 40% of the bad parts after the first test cycle. Before introducing the AI solution, the bad parts always went through four test cycles. Since the test cells are the bottleneck stations on the line, the saved test cycles can increase the output of the line, reduce cycle time, increase quality and reduce error costs. This was proven on a pilot line. The rollout of the AI solution offers the potential for an increase in output of approximately €70,000 per year and a cost reduction of nearly 1 million (since no additional test stations need to be purchased for more complex testing). This was only the beginning. With our standardized architecture, we have the foundation for a quick and easy implementation of further AI use cases. By implementing AI in manufacturing, we expect an increase in productivity of 10% in the next five years.
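Editorial illustration (not part of the interview): the effect of early detection on test bench load can be estimated with simple arithmetic. The sketch assumes that the 40% of bad parts detected early need exactly one test cycle and that the remaining bad parts still run all four cycles. That second assumption is ours, not a figure from the interview. The calculation covers test cycles for bad parts only and does not reproduce the monetary figures quoted above.

```python
def expected_cycles_per_bad_part(early_detect_share: float,
                                 max_cycles: int = 4) -> float:
    """Average number of test cycles a bad part consumes.

    early_detect_share: fraction of bad parts rejected after the first cycle.
    max_cycles: cycles consumed by a bad part that is not detected early
                (assumed to be the full four cycles).
    """
    if not 0.0 <= early_detect_share <= 1.0:
        raise ValueError("early_detect_share must be between 0 and 1")
    return early_detect_share * 1 + (1.0 - early_detect_share) * max_cycles


def saved_share(early_detect_share: float, max_cycles: int = 4) -> float:
    """Fraction of bad-part test cycles saved compared with always retesting."""
    after = expected_cycles_per_bad_part(early_detect_share, max_cycles)
    return 1.0 - after / max_cycles


cycles = expected_cycles_per_bad_part(0.4)
print(f"average cycles per bad part: {cycles:.1f}")
print(f"share of bad-part test cycles saved: {saved_share(0.4):.0%}")
```

Under these assumptions, the average drops from 4 to about 2.8 cycles per bad part, that is, roughly 30% fewer test cycles spent on bad parts at the bottleneck station.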

DS: What are the next steps for you?

SK: Our vision is that in the future, we will fully understand all cause-effect relations between our product, machine and processes and create a new way of learning with the help of artificial intelligence to assist our people in increasing the productivity of our lines. To achieve this, the following steps are planned and are already in progress:

- Pioneering edge computing: First, we are working on a faster edge application. We have to bring the decisions from AI even faster to the manufacturing station. For the first use cases, our edge application is still sufficient. However, for AI use cases on short-cycle assembly lines (approximately 1 second cycle time), the current edge solution is no longer sufficient. Here, we are already working on solutions to deliver the predictions back to the line within fractions of a second, even for such use cases.

- Automated machine learning: 80% of our data are already preprocessed automatically. Our target is to further increase the automation rate. In addition, we have ideas on how to automate the selection of the right ML model with an appropriate hyperparameter search. Of course, we are also working on an automated analysis of ML decisions to monitor the health status of models in production.

- Implement more use cases: Our architecture is designed for thousands of AI use cases. We have to identify and implement these. In doing so, we make sure that we do not implement showcases. We want to implement real use cases for our Digital Factory, including:

  - Predict process parameters: learn optimal process parameters from prior processes (e.g. prior to final assembly)

  - Adaptive tolerances

  - Bayesian network: We want to train a Bayesian network on all parameters of the HU9 final assembly. This means that influences and relations can be read from the graph. Relations are much deeper than pairwise correlations.
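Editorial illustration (not part of the interview): the automated hyperparameter search mentioned under "Automated machine learning" can be pictured, in its simplest form, as an exhaustive grid search. The sketch below is generic and does not show the tooling Bosch CC uses. The scoring function is a toy stand-in for "train a model with these settings and return its validation score", and the parameter names are hypothetical.

```python
import itertools
from typing import Any, Callable, Dict, Sequence, Tuple


def grid_search(score_fn: Callable[[Dict[str, Any]], float],
                grid: Dict[str, Sequence[Any]]) -> Tuple[Dict[str, Any], float]:
    """Try every combination in `grid` and return the best-scoring one."""
    names = list(grid)
    best_params: Dict[str, Any] = {}
    best_score = float("-inf")
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = score_fn(params)
        if score > best_score:  # higher score is better
            best_params, best_score = params, score
    return best_params, best_score


def toy_score(params: Dict[str, Any]) -> float:
    # Stand-in for a validation score; peaks at learning_rate=0.1, depth=3.
    return -(params["learning_rate"] - 0.1) ** 2 - (params["depth"] - 3) ** 2


best, score = grid_search(
    toy_score,
    {"learning_rate": [0.01, 0.1, 1.0], "depth": [2, 3, 4]},
)
print(best)
```

Grid search is only the most basic option. Its cost grows multiplicatively with every added parameter, which is one reason random or model-based search is often preferred in practice.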

DS: What are the key lessons learned thus far?

SK: For a continuous improvement program like ours, AI must be industrialized: our approach of making AI applicable on a large scale, instead of building individual “lighthouse projects” that are not easily adaptable to other use cases, has been proven right. Another key success factor is the standardized architecture: by storing all the data in a cloud, we can ensure a holistic view of the data across all factories in our global manufacturing network and use this view to train the models centrally with all the required data at hand. Next, we can take the trained models and deploy them on the edge layer as close to the actual production lines as possible. To work quickly and efficiently on the implementation of AI use cases, new competence and work models must be established. The most important thing is that digital transformation must be a part of corporate strategy. After all, digital transformation can succeed only if all employees work together on the path toward a data-driven organization.

DS: Uli, what is your perspective on this?

Uli Homann: In this project, Bosch shows first-hand how to streamline operations, cut costs, and accelerate innovation across the entire product life-cycle by driving change holistically across culture, governance, and technology. Through partnership with Microsoft, Bosch is unlocking the convergence of OT and IT. The holistic approach provided by the Digital Playbook enables a continuous feedback loop that helps teams turn AI-driven data insights into business value at scale.

DS: Thank you, both!