AIoT Data and Functional Viewpoint

The Data and Functional Viewpoint provides design details that focus on the overall functionality of the product or solution, as well as the underlying data architecture. The starting point can be a refinement of the AIoT Solution Sketch. A better understanding of the data architecture can be achieved with a basic data domain model. The component and API landscape is the initial functional decomposition, and will have a huge impact on the implementation. The optional Digital Twin landscape helps understand how the Digital Twin concept fits into the overall design. Finally, AI Feature Mapping helps identify which features are best suited to an implementation with AI.

AIoT Solution Sketch

The AIoT Solution Sketch from the Business Model can be refined in this perspective, adding more layers of detail and presenting them in a slightly more structured way. The solution sketch should include the physical asset or product (the robovac in our example), as well as other key assets (e.g., the robovac charging station) and key users. Since interactions between the physical assets and the back end are key, they should be listed explicitly. The sketch should also include an overview of key UIs, the key business processes supported, the key AI and analytics-related elements, the main data domains, and external databases or applications.

Data Domain Model

The Data Domain Model should provide a high-level overview of the key entities of the product design, including their relationships to external systems. The Domain Model should include approximately a dozen key entities. It does not aim to provide the same level of detail as a thorough data schema or object model. Instead, it should serve as the foundation for discussing data requirements between stakeholders, across multiple stakeholder groups in the product team.

For example, the main data domains that have been identified for the ACME:Vac product are the customer, the robovac itself, floor maps and cleaning data. Each of these domains is described by listing the 5-10 key entities within. This is typically a good level of detail: sufficiently meaningful for planning discussions, without getting lost in detail.
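To illustrate the intended level of detail, the four ACME:Vac data domains could be sketched as a handful of related entities. This is a minimal sketch only: the entity and field names (`Customer`, `Robovac`, `FloorMap`, `CleaningRun` and their attributes) are assumptions made for illustration, not the actual ACME:Vac domain model, and a real model would list more entities per domain.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Customer:
    # Domain: customer (hypothetical fields)
    customer_id: str
    name: str


@dataclass
class Robovac:
    # Domain: robovac (hypothetical fields)
    serial_number: str
    owner: Customer


@dataclass
class FloorMap:
    # Domain: floor maps (hypothetical fields)
    map_id: str
    robovac: Robovac
    rooms: List[str] = field(default_factory=list)


@dataclass
class CleaningRun:
    # Domain: cleaning data (hypothetical fields)
    run_id: str
    robovac: Robovac
    floor_map: FloorMap
    duration_minutes: float
    area_cleaned_m2: float


def domains() -> Dict[str, list]:
    """Group the entity types by the data domain they belong to."""
    return {
        "customer": [Customer],
        "robovac": [Robovac],
        "floor maps": [FloorMap],
        "cleaning data": [CleaningRun],
    }


alice = Customer(customer_id="c-1", name="Alice")
vac = Robovac(serial_number="rv-42", owner=alice)
ground_floor = FloorMap(map_id="m-1", robovac=vac, rooms=["kitchen", "hall"])
run = CleaningRun("r-1", vac, ground_floor, duration_minutes=35.0, area_cleaned_m2=48.5)
```

Even a sketch this small makes the relationships explicit (a cleaning run refers to a robovac and a floor map, a robovac to its owner), which is what the planning discussion between stakeholder groups needs.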

The design team must decide whether the data required from an AI perspective should already be included here. This can make sense if AI-related data also play an important role in other, non-AI-based parts of the system. In this case, potential dependencies can be identified and discussed here. If the AI has dedicated input sources (e.g., specific sensors that are only used by the AI), then the more interesting question at this point is most likely what kind of data or information the AI provides as an output.

Component and API Landscape

To manage the complexity of an AIoT-enabled product or solution, the well-established approach of functional decomposition and componentization should be applied. The results should be captured in a high-level component landscape, which helps visualize and communicate the key functional components.

Functional decomposition and componentization

Functional decomposition is a method of analysis that breaks a complex body of work down into smaller, more easily manageable units. This “Divide & Conquer” strategy is essential for managing complexity. Especially if the body of work cannot be implemented by a single team, splitting the work in a way that it can be assigned to different teams in a meaningful way becomes very important. Since a system's architectural structure tends to be a mirror image of the organizational structure, it is important that team building follows the functional decomposition process. The idea of "feature teams" to support this is discussed in the AIoT Product Viewpoint.

Another key point of functional decomposition is functional scale: without effective functional decomposition and management of functional dependencies, it will be difficult to build a functionally rich application. It does not stop at building the initial release. Most modern software-based systems are built on the agile philosophy of continuous evolution. While an AIoT-enabled product or solution does not only consist of software -- it also includes AI and hardware -- enabling evolvability is usually a key requirement. Functional decomposition and componentization will enable the encapsulation of changes and thus support efficient system evolution.

The logical construct for encapsulating key data and functionality in an AIoT system should be the component. From a functional viewpoint, components are logical constructs, independent of a particular technology. Later in the implementation viewpoint, they can be mapped to specific programming languages, AI platforms, or even specific functionality implemented in hardware. Additionally, component frameworks such as microservices can be added where applicable.

The functional decomposition process should go hand in hand with the development of the agile story map (see AIoT Product Viewpoint) since the story map will contain the official definition of the body of work, broken down into epics and features.

The first iteration of the component landscape can actually be very close to the story map, since it should truly only focus on the functional decomposition. In a second iteration, the logical AIoT components must be mapped to a distributed component architecture. This perspective is actually somewhat between the functional and implementation viewpoints. The mapping to the distributed component architecture must take a number of different aspects into consideration, including business/functional requirements, cost constraints, technical constraints and architectural constraints.

A key functional requirement is simply availability. In a distributed system, remote access to a component always carries a higher risk of the component not being available, e.g., due to connectivity issues. Other business-driven aspects are organizational constraints (especially if different parts of the distributed system are developed by different organizational units), physical control and legal aspects (deploying critical data or functionality in the field or in certain countries can be difficult). This is also closely related to data ownership and data sharing requirements.

Achim Nonnenmacher, expert for Software-defined Vehicle at Bosch comments: Because of the availability issues related to distributed applications, many leading services are using a capability-based architecture. For example, many smart phones have two versions of their key services - one which works with the data and capabilities available on the phone, and one which works only with cloud connectivity. For example, you can say "Hey, bring me home", and the offline phone will still be able to provide rudimentary voice recognition and navigation services using the AI and map data on the phone. Only if the phone is online will it be able to make full use of better cloud-based AI and data. We still have to learn in many ways how to apply this to the vehicle-based applications of the future, but this will be important.
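The capability-based approach described in this comment can be sketched as a service that prefers the richer cloud capability and falls back to an on-device one when connectivity is missing. This is an illustrative sketch only: the function names (`cloud_route`, `local_route`, `navigate`) and the `online` flag are hypothetical, and a real system would detect connectivity itself rather than take it as a parameter.

```python
class CapabilityUnavailable(Exception):
    """Raised when a capability cannot be used in the current context."""


def cloud_route(destination: str, online: bool) -> str:
    # Hypothetical cloud capability: only usable with connectivity.
    if not online:
        raise CapabilityUnavailable("no connectivity to cloud back end")
    return f"cloud route to {destination} (live data)"


def local_route(destination: str) -> str:
    # Hypothetical on-device capability: always available, but more basic.
    return f"on-device route to {destination} (cached map)"


def navigate(destination: str, online: bool) -> str:
    """Prefer the cloud capability, degrade gracefully to the local one."""
    try:
        return cloud_route(destination, online)
    except CapabilityUnavailable:
        return local_route(destination)
```

The caller only sees `navigate`; which capability actually served the request is an internal decision, so the feature remains available, in reduced form, when the device is offline.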

Another key distribution aspect is cost constraints. For example, many manufacturers of physical products have to ensure that the unit costs are kept to a minimum. This can be an argument for deploying computationally intensive functions not on the physical product but rather on a cloud back end, which can better distribute loads coming from a large number of connected products. Similar arguments apply to communication costs and data storage costs.

Furthermore, the distributed architecture will be greatly influenced by technical constraints, such as latency (the minimum amount of time for a single bit of data to travel across the network), bandwidth (data transfer capacity of the network), performance (e.g., edge vs. cloud compute performance), time sensitivity (related to latency), and security.
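How latency and bandwidth interact can be made concrete with a rough back-of-the-envelope estimate. The sketch below assumes a deliberately simplified model (one-way latency plus payload size divided by bandwidth, ignoring protocol overhead, retransmissions and congestion); the function names and all numbers are illustrative assumptions, not measurements.

```python
def transfer_time_s(payload_bytes: int, bandwidth_bit_per_s: float, latency_s: float) -> float:
    """Rough estimate: latency plus the time needed to push the payload through the link."""
    return latency_s + (payload_bytes * 8) / bandwidth_bit_per_s


def meets_deadline(payload_bytes: int, bandwidth_bit_per_s: float,
                   latency_s: float, deadline_s: float) -> bool:
    """Check whether a remote call could plausibly finish within a time budget."""
    return transfer_time_s(payload_bytes, bandwidth_bit_per_s, latency_s) <= deadline_s


# Illustrative numbers: a 2 MB payload over a 1 Mbit/s uplink with 50 ms latency.
upload_s = transfer_time_s(2_000_000, 1_000_000, 0.05)
```

Under these assumptions, uploading a large payload is dominated by bandwidth, while a small, time-sensitive message is dominated by latency. This is the kind of estimate that can inform whether a function belongs on the edge or in the cloud.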

Finally, a number of general aspects should be considered from the architectural perspective. For example, the technical target platform (e.g., software vs. AI) will play a role. Another factor is the different speed of development: a single component should not combine functionalities that will evolve at significantly different speeds. Similarly, one should avoid combining, in a single component, functionality that only requires standard Quality Management (QM) with functionality that must be treated as functional safety relevant. Otherwise, the QM functionality must also be treated as functional safety relevant, making it more costly to test, maintain and update.

While some of these constraints and considerations are of a more technical nature, they need to be combined with more business or functional considerations when designing the distributed component architecture.

Component Landscape

The result of the functional decomposition process should be a component landscape, which focuses on functional aspects but already includes a high-level distribution perspective.

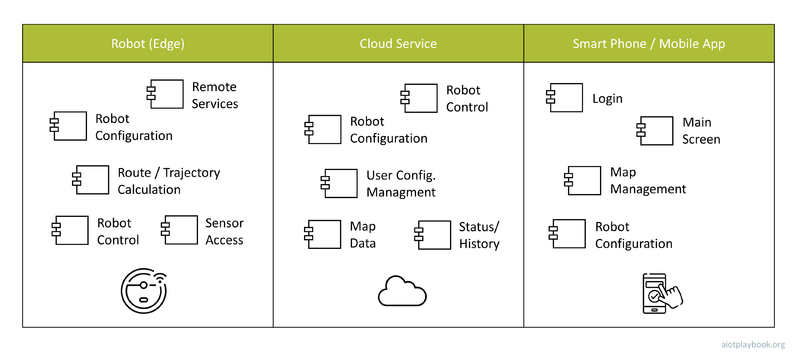

The example shown here is the high-level component landscape for the ACME:Vac product. This component landscape has three swimlanes: one for the robovac (i.e., the edge platform), one for the main cloud service, and one for the smartphone running the ACME:Vac mobile app. The components of the robot include basic robot control and sensor access, as well as the route/trajectory calculation. These components would most likely be based on an embedded platform, but this level of detail is omitted from a functional viewpoint. In addition, the robot will have a configuration component, as well as a component offering remote services that can be accessed from the robot control component in the cloud.

In addition, the cloud contains components for robot configuration, user configuration management, map data, and the management of the system status and usage history. Finally, the mobile app has a component to manage the main app screen, map management, and remote robot configuration.

API Management

In his famous "API Mandate", Jeff Bezos -- CEO of Amazon at the time -- declared that at Amazon, "All teams will henceforth expose their data and functionality through service interfaces." If the CEO of a major company gets involved at this level, you can tell how important this topic is.

APIs (Application Programming Interfaces) are how components make data and functionality available to other components. Today, a common approach is to use so-called RESTful APIs, which build on the popular HTTP protocol. However, there are many different types of APIs. In AIoT, another important category of APIs sits between the software and the hardware layer. These APIs are often provided as low-level C APIs (of course, any C API can in turn be wrapped in a REST API and exposed to remote clients). Most APIs support a basic request/response pattern to enable interactions between components. Some applications require a more message-oriented, de-coupled way of interaction. This requires a special kind of API.

Regardless of the technical nature of the API, it is good practice to document APIs via an API contract. This contract defines the input and output arguments, as well as the expected behavior. "Interface first" is an important design approach which mandates that before implementing a new application component, one should first define the APIs, including the API contract. This approach ensures de-coupling between component users and component implementers, which in turn reduces dependencies and helps manage complexity. Because APIs are such an important part of modern, distributed system development, they should be managed and documented as key artefacts, e.g., using modern API management tools which support API documentation standards like OpenAPI.
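The "interface first" idea can be sketched in code by writing the contract down before any real implementation exists. The example below is a minimal sketch under assumptions: the `RobotRemoteService` contract, its operations and the `RobotStatus` type are hypothetical and not taken from the ACME:Vac design. It uses Python's `typing.Protocol` to express the contract and a simple in-memory stand-in so that client code can be written against the contract alone.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class RobotStatus:
    battery_percent: int
    state: str  # e.g. "idle" or "cleaning"


class RobotRemoteService(Protocol):
    """Hypothetical API contract: arguments, results and expected behavior."""

    def get_status(self, robot_id: str) -> RobotStatus:
        """Return the current status of the given robot."""
        ...

    def start_cleaning(self, robot_id: str, map_id: str) -> bool:
        """Start a cleaning run; return False if the robot is already cleaning."""
        ...


class InMemoryRobotService:
    """Stand-in implementation that lets clients be built before the real service exists."""

    def __init__(self) -> None:
        self._states: Dict[str, str] = {}

    def get_status(self, robot_id: str) -> RobotStatus:
        return RobotStatus(battery_percent=100, state=self._states.get(robot_id, "idle"))

    def start_cleaning(self, robot_id: str, map_id: str) -> bool:
        if self._states.get(robot_id) == "cleaning":
            return False
        self._states[robot_id] = "cleaning"
        return True


def request_cleaning(service: RobotRemoteService, robot_id: str, map_id: str) -> str:
    """Client code depends only on the contract, not on a concrete implementation."""
    started = service.start_cleaning(robot_id, map_id)
    return "started" if started else "already cleaning"
```

Because `request_cleaning` only depends on the contract, the team implementing the real service and the team implementing the client can work in parallel, which is the de-coupling the approach aims for.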

From the system design point of view, the component landscape introduced earlier should be augmented with information about the key APIs supported by the different components. For example, the component landscape documentation can link to the detailed API documentation in different repositories. This way, the component landscape provides a high-level description not only of how data and functionality are clustered in the system, but also of how they can be accessed.

Digital Twin Landscape

As introduced in the Digital Twin 101 section, using the Digital Twin concept can be useful, especially when dealing with complex physical assets. In this case, a Digital Twin Landscape should be included with the Data/Functional Viewpoint. The Digital Twin Landscape should provide an overview of the key logical Digital Twin models and their relationships. Relationships between Digital Twin model elements can be manifold. They should be used to help define the so-called "knowledge graph" across different, often heterogeneous data sources used to construct the Digital Twin model.

In some cases, the implementation of the Digital Twin will rely on specific standards and Digital Twin platforms. The Digital Twin Landscape should keep this in mind and only use modeling techniques that will be supported later by the implementation environment. For example, the Digital Twins Definition Language (DTDL) is an open standard supported by Microsoft, specifically designed to support modeling of Digital Twins. Some of the rich features of DTDL include Digital Twin interfaces and components, different kinds of relationships, as well as persistent properties and transient telemetry events.

In the example shown here, these modeling features are used to create a visual Digital Twin Landscape for the ACME:Vac example. The example differentiates between two types of Digital Twin model elements: system (green) and environment (blue). The system elements relate to the physical components of the robovac system. Environment elements relate to the environment in which a robovac system is actually deployed.

This differentiation is important for a number of reasons. First, the Digital Twin system elements are known in advance, while the Digital Twin environment elements actually need to be created from sensor data (see the discussion on Digital Twin reconstruction).

Second, while the Digital Twin model is supposed to provide a high level of abstraction, it cannot be seen completely in isolation of all the different constraints discussed in the previous section. For example, not all telemetry events used on the robot will be directly visible in the cloud. Otherwise, too much traffic between the robots and the cloud will be created.

This is why the Digital Twin landscape in this example assigns different types of model elements to different components. In this way, the distributed nature of the component landscape is taken into consideration, allowing for the creation of realistic mapping to a technical implementation later on.
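To make the DTDL features mentioned above more tangible, the following sketch builds two small DTDL (version 2) interfaces as Python dictionaries and serializes them to JSON: one system element (the robovac) and one environment element (a room). The model identifiers (`dtmi:com:acme:vac:...`) and all property, telemetry and relationship names are hypothetical and chosen for illustration only; they are not part of the ACME:Vac example.

```python
import json
from typing import Dict, List


def interface(model_id: str, display_name: str, contents: List[dict]) -> Dict[str, object]:
    """Wrap a list of DTDL content elements in a DTDL v2 Interface."""
    return {
        "@context": "dtmi:dtdl:context;2",
        "@id": model_id,
        "@type": "Interface",
        "displayName": display_name,
        "contents": contents,
    }


# Environment element (hypothetical): reconstructed from sensor data.
room = interface("dtmi:com:acme:vac:Room;1", "Room", [
    {"@type": "Property", "name": "areaM2", "schema": "double"},
])

# System element (hypothetical): known in advance.
robovac = interface("dtmi:com:acme:vac:Robovac;1", "Robovac", [
    # Persistent property
    {"@type": "Property", "name": "firmwareVersion", "schema": "string"},
    # Transient telemetry event
    {"@type": "Telemetry", "name": "batteryLevel", "schema": "double"},
    # Relationship from the system element to an environment element
    {"@type": "Relationship", "name": "cleans", "target": "dtmi:com:acme:vac:Room;1"},
])

if __name__ == "__main__":
    print(json.dumps([robovac, room], indent=2))
```

Keeping properties, telemetry and relationships as separate content types makes it easier to decide later which elements are synchronized to the cloud and which remain local to the robot.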

AI Feature Mapping

The final element in the Data/Functional Viewpoint should be an assessment of the key features with respect to suitability for implementation with AI. As stated in the introduction, a key decision for product managers in the context of AIoT will be whether a new feature should be implemented using AI, Software, or Hardware. To ensure that the potential for the use of AI in the system is neither neglected nor overstated, a structured process should be applied to evaluate each key feature in this respect.

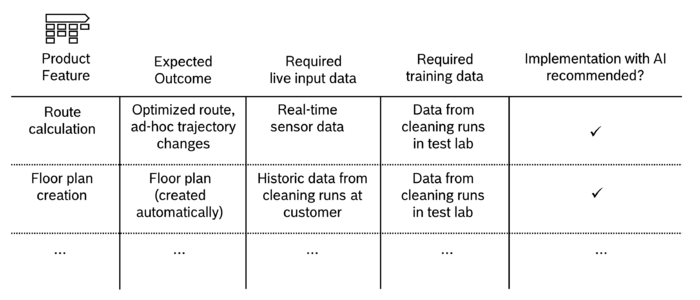

In the example shown here, the features from the agile story map (see AIoT Product Viewpoint) are used as the starting point. For each feature, the expected outcome is examined. Furthermore, from an AI point of view, it needs to be understood which live data can be made available to potentially AI-enabled components, as well as which training data. Depending on this information, an initial recommendation regarding the suitability of a given feature for implementation with AI can be derived. This information can be mapped back to the overall component landscape, as indicated by the figure below. Note also that a new component for cloud-based model training is added to this version of the component landscape. Note that this level of detail does not describe, for example, details of the algorithms used, e.g., Simultaneous Localization and Mapping (SLAM).
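The structure of such a feature mapping can be sketched as a small record per feature plus a rule that derives an initial recommendation. The text does not prescribe a concrete rule, so the one below (data availability as the only criterion) is purely an assumption for illustration; the feature names, data sources and recommendation labels are hypothetical as well.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FeatureAssessment:
    feature: str            # taken from the agile story map
    expected_outcome: str
    live_data: List[str] = field(default_factory=list)      # data available at runtime
    training_data: List[str] = field(default_factory=list)  # data available for training


def recommend(assessment: FeatureAssessment) -> str:
    """Assumed rule of thumb: AI is only a candidate if both kinds of data exist."""
    if assessment.live_data and assessment.training_data:
        return "candidate for AI"
    if assessment.live_data or assessment.training_data:
        return "clarify data availability first"
    return "implement with conventional software or hardware"


# Hypothetical entries for illustration.
obstacle_detection = FeatureAssessment(
    feature="obstacle detection",
    expected_outcome="robot avoids objects on the floor",
    live_data=["camera frames"],
    training_data=["labeled images of household objects"],
)
cleaning_schedule = FeatureAssessment(
    feature="cleaning schedule",
    expected_outcome="robot starts at user-defined times",
)
```

In practice, the recommendation would be a team judgment informed by more criteria than data availability; the point of the sketch is only that each feature is assessed against the same explicit questions.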
